I explained the current PATCH methodology in my previous post. Today I’m going to do a deep dive into how PATCH views the current teams in the EPL. Here’s what the table looks like (pre-Southampton on Saturday):

| Team | PATCH |
|---|---|
| Chelsea | 2.84 |
| Manchester United | 2.79 |
| Liverpool | 2.73 |
| Tottenham Hotspur | 2.70 |
| Manchester City | 2.68 |
| Bournemouth | 2.64 |
| Arsenal | 2.61 |
| Southampton | 2.45 |
| Leicester City | 2.38 |
| Aston Villa | 2.34 |
| Norwich City | 2.28 |
| Watford | 2.27 |
| Crystal Palace | 2.27 |
| West Ham United | 2.27 |
| Everton | 2.22 |
| Swansea City | 2.18 |
| Stoke City | 2.13 |
| West Bromwich Albion | 2.11 |
| Newcastle United | 1.97 |
| Sunderland | 1.88 |

The values for PATCH are the 60th percentile of all performances for each team. You could, if you were highly motivated, work out the actual units for PATCH, but treat it as abstract. As a rough guide, 2 is around average, somewhere just under 4 is the 90th percentile of player performances, and 5+ would be outstanding. Given that, I’m reasonably happy with how this shapes up.
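To make the 60th-percentile summary concrete, here’s a minimal Python sketch. It assumes each “performance” is one player’s PATCH score in one game, tagged with their team, and it interpolates linearly between ranks (NumPy’s default convention). The post doesn’t say which interpolation rule it uses, and the function names here are hypothetical.

```python
from collections import defaultdict


def percentile(values, pct):
    """Linear-interpolation percentile (same convention as NumPy's default)."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("no values")
    rank = (len(ordered) - 1) * pct / 100.0
    lo = int(rank)
    hi = min(lo + 1, len(ordered) - 1)
    frac = rank - lo
    return ordered[lo] + (ordered[hi] - ordered[lo]) * frac


def team_patch(performances, pct=60):
    """performances: iterable of (team, patch_score) pairs, one per player-game
    (an assumed layout). Returns {team: score at the given percentile}."""
    by_team = defaultdict(list)
    for team, score in performances:
        by_team[team].append(score)
    return {team: percentile(scores, pct) for team, scores in by_team.items()}


# Toy data, not real PATCH scores
print(team_patch([("Chelsea", 1.5), ("Chelsea", 2.0), ("Chelsea", 3.0), ("Chelsea", 4.0)]))
```

Using a percentile rather than the mean keeps a handful of freakishly good or bad games from dragging a team’s headline number around.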

PATCH Correlations

At first glance the numbers above don’t look bonkers, but how does the metric correlate with other team defensive stats? Let’s have a look:

| Percentile | GA | xGA | Shots Against | SoT Against |
|---|---|---|---|---|
| 10th | 0.06 | 0.10 | 0.08 | 0.06 |
| 20th | 0.22 | 0.30 | 0.20 | 0.15 |
| 30th | 0.28 | 0.44 | 0.38 | 0.30 |
| 40th | 0.29 | 0.56 | 0.54 | 0.44 |
| 50th | 0.27 | 0.61 | 0.63 | 0.45 |
| 60th | 0.27 | 0.71 | 0.76 | 0.55 |
| 70th | 0.19 | 0.65 | 0.71 | 0.48 |
| 80th | 0.16 | 0.60 | 0.62 | 0.38 |
| 90th | 0.10 | 0.47 | 0.50 | 0.29 |

Those are the R² values for each team’s PATCH values at a given percentile (10th being the lowest 10%, i.e. the worst defensive performances), compared against some traditional measures. It’s great to see that we’re nicely correlated with expected goals against and shots, though I should point out that shots go directly into the calculation: if you allow a shot through your territory, it’s marked against you. However, that’s only a small proportion of the gains measured. I tested with shots removed from the ball progression metric just to be sure, and the correlations barely went down.
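Each R² above is the squared Pearson correlation across the 20 teams. Here’s a stdlib-only sketch of that calculation; the inputs are hypothetical per-team lists in the same team order (for example, every team’s PATCH at the 60th percentile alongside every team’s xGA). Squaring drops the sign, and since a higher PATCH means a better defence, you’d expect the raw correlation with goals or shots against to be negative.

```python
def r_squared(xs, ys):
    """Square of the Pearson correlation between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences of at least 2 values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov * cov / (var_x * var_y)


# Toy example: a perfectly negative relationship still gives R² = 1
print(r_squared([1, 2, 3, 4], [8, 6, 4, 2]))
```

One row of the table would then come from recomputing each team’s PATCH at that percentile and calling this once per defensive measure.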

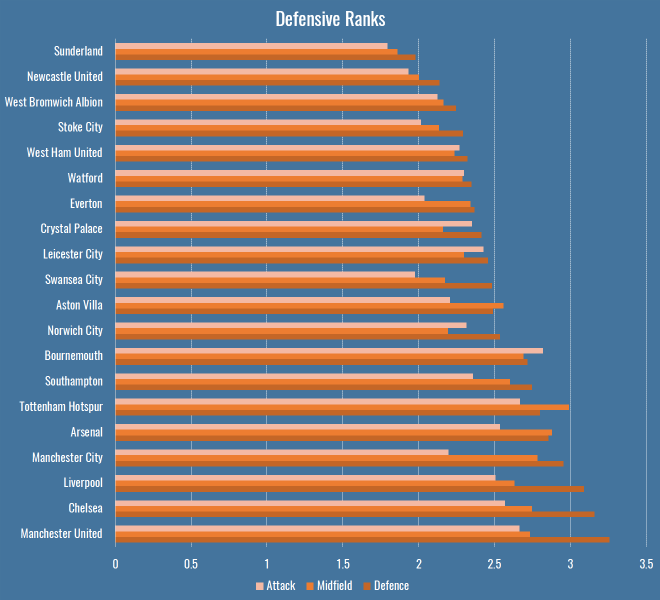

Defensive Ranks

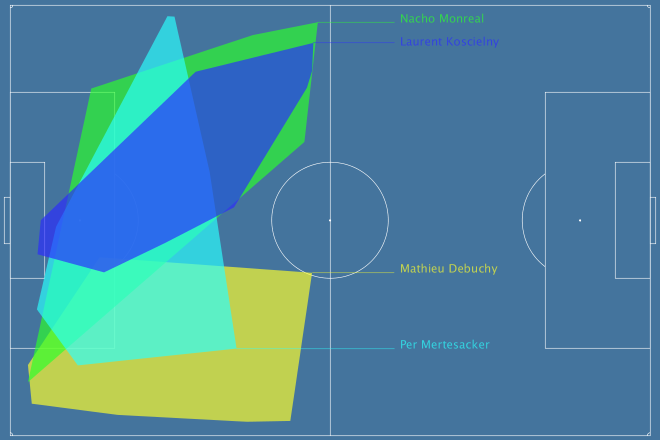

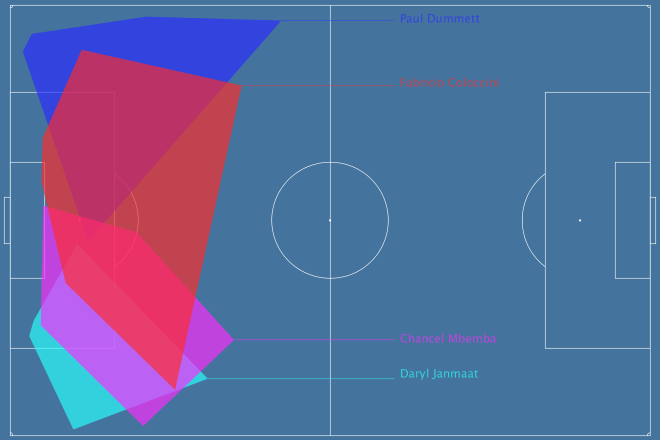

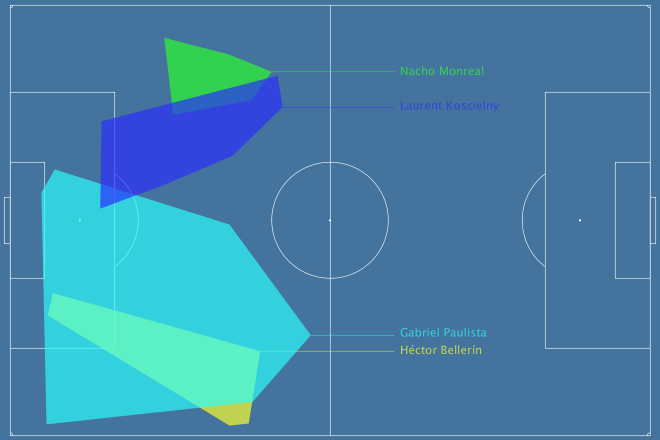

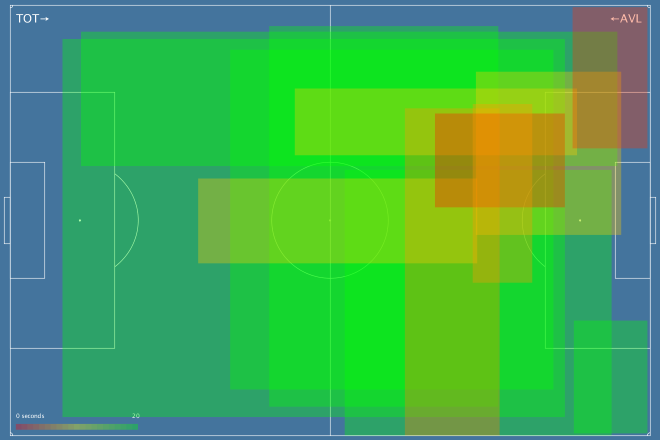

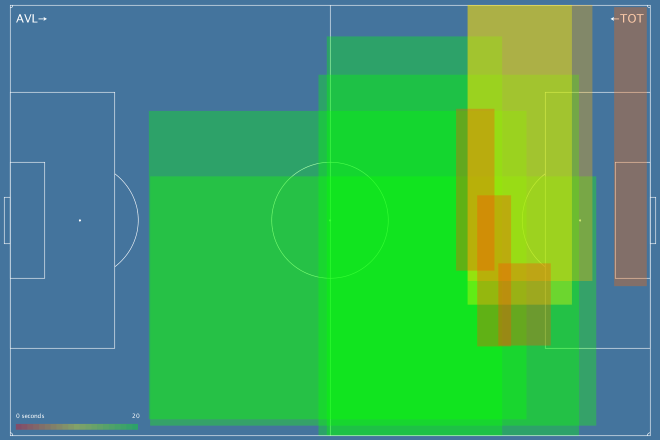

So far we’ve only looked at teams’ performances en masse, as measured by PATCH. This is what things look like if we break them down by rank in a team’s formation:

There are a few interesting patterns that immediately jump out:

- Bournemouth’s attacking midfielders and forwards are doing a bunch of defensive work.

- Manchester City’s less so.

- Tottenham have the least penetrable midfield of any team in the league.

- As you might expect, Leicester’s attack and defence are a little more robust than their midfield, reflecting the fact that they press high then retreat low.
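A breakdown like this amounts to grouping performances by team and by line before summarising. The sketch below assumes each performance carries a formation position label; the `POSITION_TO_LINE` mapping is purely illustrative, and the aggregate defaults to the mean only because the post doesn’t say which summary statistic the rank charts use.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical mapping from a formation position label to a line ("rank").
# Positions not listed here (e.g. goalkeepers, in this sketch) are skipped.
POSITION_TO_LINE = {
    "CB": "Defence", "LB": "Defence", "RB": "Defence",
    "DM": "Midfield", "CM": "Midfield", "LM": "Midfield", "RM": "Midfield",
    "AM": "Attack", "LW": "Attack", "RW": "Attack", "FW": "Attack",
}


def patch_by_line(performances, aggregate=mean):
    """performances: iterable of (team, position, patch_score) tuples.
    Returns {team: {line: aggregated score}}."""
    grouped = defaultdict(lambda: defaultdict(list))
    for team, position, score in performances:
        line = POSITION_TO_LINE.get(position)
        if line is None:
            continue  # unmapped position
        grouped[team][line].append(score)
    return {
        team: {line: aggregate(scores) for line, scores in lines.items()}
        for team, lines in grouped.items()
    }
```

Passing a different `aggregate` (say, a 60th-percentile function) lets you line the breakdown up with the headline table.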

Lingering on these numbers a little longer, I thought I’d compare them to someone else’s model as a further sanity check. Mark Thompson of Every Team Needs a Ron is one of my favourite writers, and is devoted to studying defenders in all their forms. He has a system to analyse how teams convert possessions into attacks, and attacks into shots, and how they allow their opponents to do the same. I compared the defensive rank data above with his data to see what the correlations were:

| | Defence | Midfield | Attack |
|---|---|---|---|
| Attacks per Possession | 0.65 | 0.47 | 0.40 |
| Shots per Attack | 0.46 | 0.53 | 0.38 |

So, comparing the Defence, Midfield and Attack PATCH values from the graph above to Mark’s Attacks per Possession and Shots per Attack, we can get an idea of how much different parts of a team contribute to breaking up attacks. Defensive PATCH values explain 65% of the variance in opponent attacks per possession, whereas midfield explains a much lower 47%. This makes some sense: while a lot of teams would love their midfield to quash potential attacks before they happen, it’s far more common for attacks to make it through to the last line. What’s interesting is the second row, where midfield performances explain shots per attack better than defence does. I wonder if this comes down to shot quality: the defence don’t (and often don’t want to) stop low-expectation long shots. However, if your midfield are putting in a good screening performance, attackers won’t even get the space for bad shots.

That’s one explanation, anyway. At the very least I’m happy to see a decent correlation with someone else’s model.

Patchwork Defences

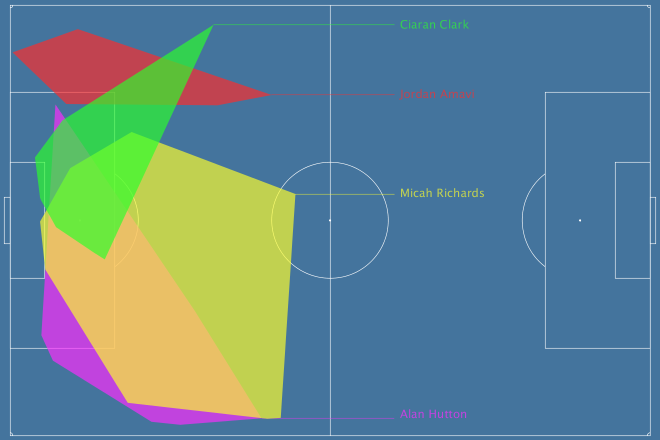

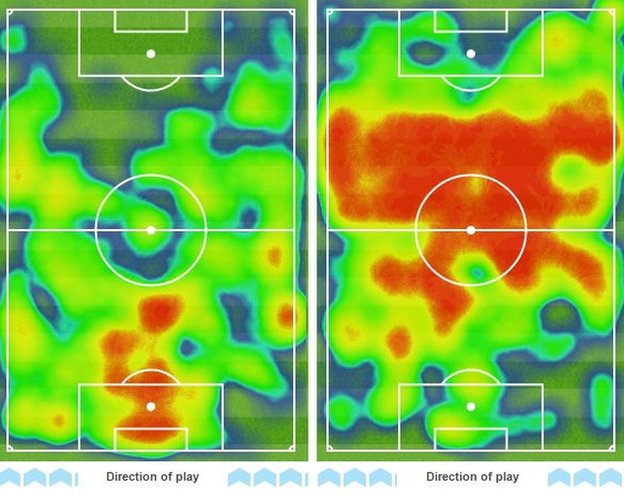

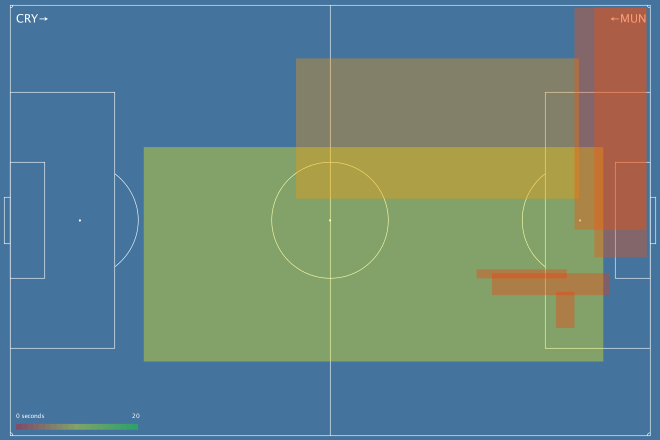

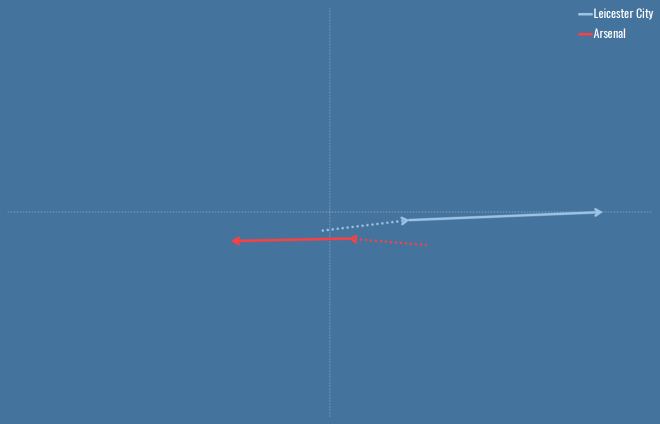

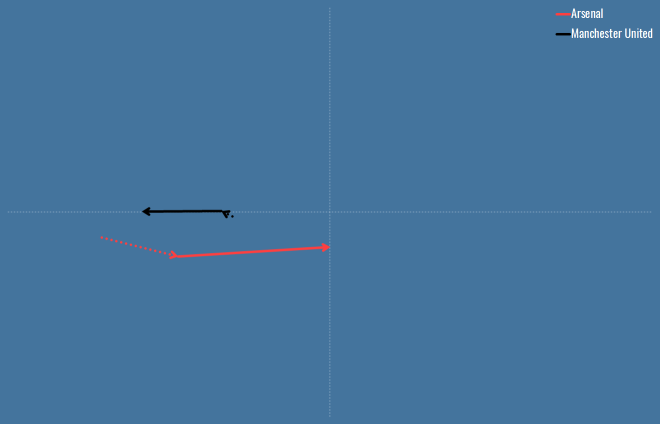

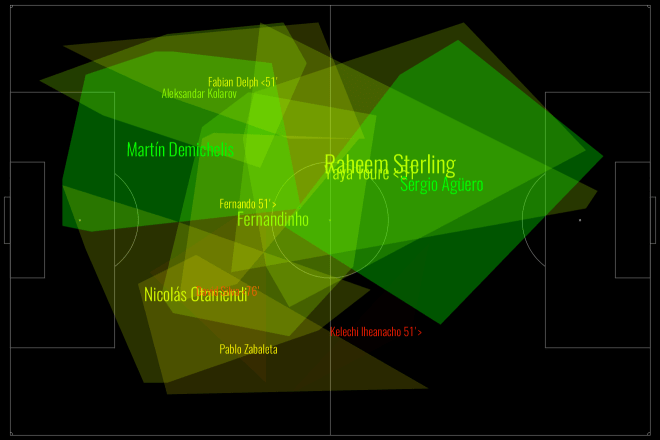

Defences are more than the sum of their parts. There are plenty of games where teams in aggregate put in a great performance in terms of total or average PATCH values, but are still torn apart on the field. This often happens because of mistakes, which PATCH will probably never be able to account for, but it also happens because of weak links that let down the greater whole. Have a look at Manchester City from this weekend’s absolutely definitive title decider against Leicester:

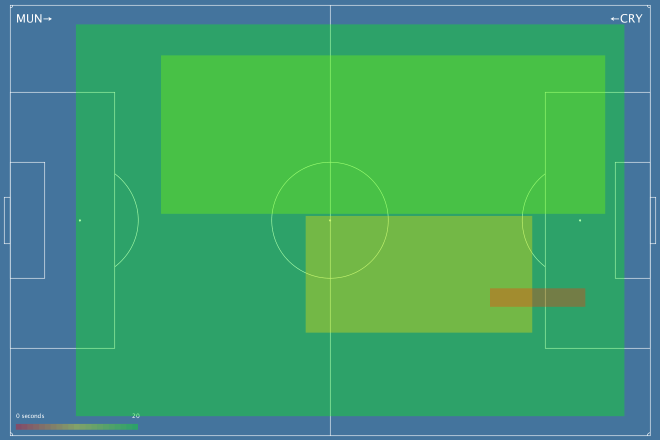

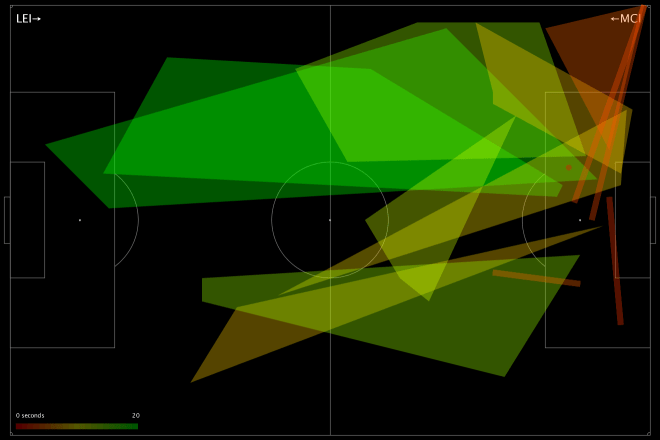

This is a fairly green chart – City policed a lot of territory, and in various parts of the pitch prevented Leicester from making regular gains. But look at their right-hand side: Otamendi didn’t score especially highly, and Zabaleta (who seems to be pushing quite far forward) scored even worse. Teams rightly fear Leicester’s right wing, because that’s where the formidable Mahrez nominally takes the field, but here we saw Mahrez pop up on the left a few times, including for Leicester’s 2nd goal, and Drinkwater also made some penetrative passes. We can see this from Leicester’s attacking chart for the day:

Very left-leaning, with basically nothing on the right. Even though City conceded twice from set pieces, you still saw scare after scare from open play. The combination of a weak defensive right-hand side and players taking higher positions than was perhaps advisable against the league’s fastest counter-attacking team (still 2nd in Europe after Caen) meant that good PATCH scores in many parts of the pitch did not necessarily add up to a good defensive performance.

Weak Links

Given what we saw in the Man City vs Leicester game, perhaps we should judge a defence by its weakest link? After all, if they’re allowing lots of ball progression in their area, that’s obviously where the opposition are attacking, whether or not they’re thinking of that player as exploitable. If we just look at the lowest score for a defender in each game (using just those with 90+ minutes in a game to be safe), this is what teams come out looking like:

| Team | Mean Weak Link PATCH |
|---|---|
| Chelsea | 2.32 |
| Manchester United | 2.06 |
| Arsenal | 1.99 |
| Manchester City | 1.97 |
| Liverpool | 1.78 |
| Aston Villa | 1.77 |
| Leicester City | 1.74 |
| Tottenham Hotspur | 1.74 |
| Southampton | 1.74 |
| Bournemouth | 1.68 |
| Swansea City | 1.62 |
| Norwich City | 1.61 |
| Crystal Palace | 1.59 |
| West Bromwich Albion | 1.58 |
| Watford | 1.54 |
| Everton | 1.53 |
| West Ham United | 1.48 |
| Stoke City | 1.48 |
| Newcastle United | 1.45 |
| Sunderland | 1.45 |

Nothing radically different here. Perhaps I should be a little uncomfortable seeing Villa that high, but their problems are save percentage and shot creation, not necessarily an awful defence. That said, these numbers correlate less well with each of the four measures we compared against earlier, so the weak-link view seems less representative.
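The weak-link numbers can be sketched as: for every team-game, keep the lowest score among defenders who played at least 90 minutes, then average those minima per team. The tuple layout `(team, game_id, minutes, patch_score)` is an assumption made for illustration.

```python
from collections import defaultdict
from statistics import mean


def mean_weak_link(performances, min_minutes=90):
    """performances: iterable of (team, game_id, minutes, patch_score) tuples
    for defenders. Returns {team: mean of per-game minimum scores}."""
    lowest = {}
    for team, game_id, minutes, score in performances:
        if minutes < min_minutes:
            continue  # skip partial appearances, as in the table above
        key = (team, game_id)
        if key not in lowest or score < lowest[key]:
            lowest[key] = score
    per_team = defaultdict(list)
    for (team, _game), score in lowest.items():
        per_team[team].append(score)
    return {team: mean(scores) for team, scores in per_team.items()}
```

The minutes filter matters: without it, a substitute with a ten-minute cameo and a tiny territory could easily become the “weakest link”.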

Total Territory

PATCH fundamentally rewards defenders for claiming territory, so let’s look at what team characteristics we can pick up from their territory as a whole. Who uses the most space? Who leaves the most gaps?

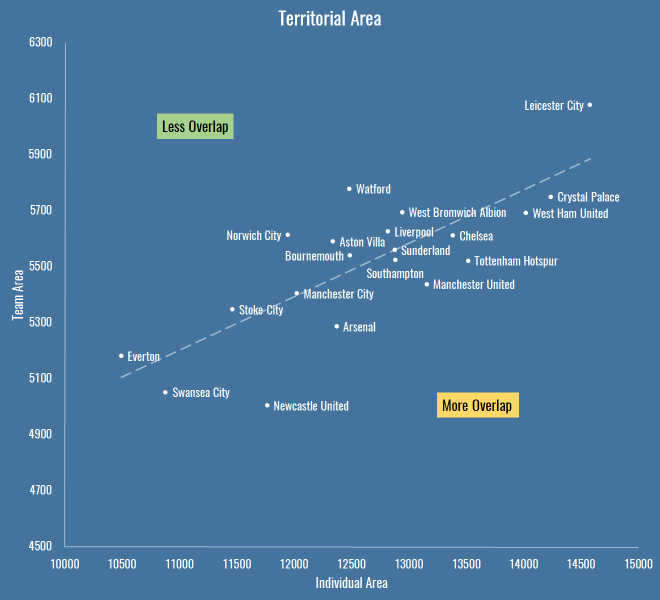

This is the total area per game of players’ defensive territory for each team, measured first as the sum of individual areas, then as a merged team area:

| Team | Total Individual Area | Team Area |
|---|---|---|
| Arsenal | 12375 | 5286 |
| Aston Villa | 12338 | 5590 |
| Bournemouth | 12487 | 5540 |
| Chelsea | 13385 | 5612 |
| Crystal Palace | 14240 | 5750 |
| Everton | 10494 | 5181 |
| Leicester City | 14580 | 6078 |
| Liverpool | 12817 | 5626 |
| Manchester City | 12025 | 5405 |
| Manchester United | 13156 | 5438 |
| Newcastle United | 11767 | 5003 |
| Norwich City | 11949 | 5614 |
| Southampton | 12885 | 5525 |
| Stoke City | 11464 | 5347 |
| Sunderland | 12880 | 5560 |
| Swansea City | 10882 | 5052 |
| Tottenham Hotspur | 13520 | 5522 |
| Watford | 12482 | 5779 |
| West Bromwich Albion | 12943 | 5694 |
| West Ham United | 14022 | 5693 |

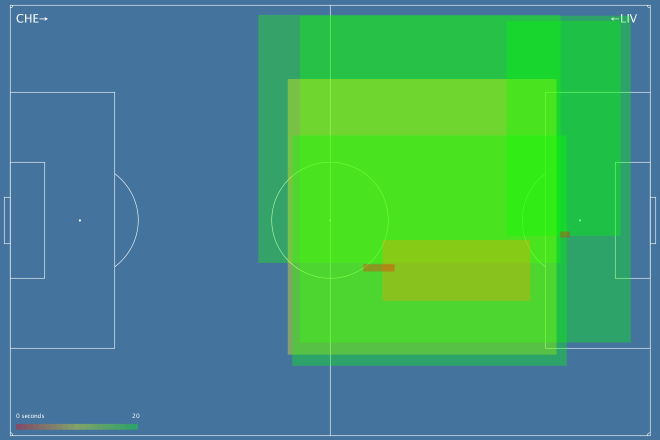

Which looks like this:

The X axis is the total individual area, which includes overlaps between players. The Y axis is the team shape: the area you get when you merge all the individual territories together and ignore overlaps. The lower this value, the more empty space the team is leaving on the pitch.

It’s interesting because it reveals teams that are quite expansive in their defensive efforts (to the right are basically the pressers and aggressors; to the left is… Everton, asking very little of its defence). It also shows teams that have an overall compact defensive shape (Newcastle) versus those that push up more (Leicester, Watford). Teams above the trend line have less overlap; those below are more crowded when defending.
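The two axes can be approximated without a geometry library by sampling a grid of points. Each sample point counts once per territory containing it (total individual area, overlaps included) and once if any territory contains it (merged team area). This rasterisation is a swapped-in approach, not necessarily how the figures above were computed, and it assumes territories are simple polygons on a 100 by 100 pitch coordinate system. For per-game figures like those in the table, you’d run it once per match and average.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: is (x, y) inside the simple polygon [(x, y), ...]?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):
            # x-coordinate where this edge crosses the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def territory_areas(territories, pitch=(100.0, 100.0), cell=0.5):
    """Approximate (total individual area, merged team area) by testing the
    centre of each grid cell against every player's territory polygon."""
    width, height = pitch
    cell_area = cell * cell
    individual = 0  # cell hits summed over players (overlaps counted repeatedly)
    merged = 0      # cells covered by at least one player
    for i in range(int(width / cell)):
        cx = (i + 0.5) * cell
        for j in range(int(height / cell)):
            cy = (j + 0.5) * cell
            hits = sum(point_in_polygon(cx, cy, poly) for poly in territories)
            individual += hits
            if hits:
                merged += 1
    return individual * cell_area, merged * cell_area
```

The gap between the two numbers is the overlap, which is what separates teams above and below the trend line.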

If we apply a similar sort of calculation to PATCH, we can take a team’s area and judge them not by the progression they allow through their territory, but by the progression that happens outside it. If we do that, these are the numbers we see:

| Team | Outside Territory PATCH |
|---|---|
| Manchester City | 24.09 |
| Liverpool | 23.55 |
| Norwich City | 22.78 |
| Southampton | 22.04 |
| Leicester City | 20.77 |
| Watford | 20.75 |
| Tottenham Hotspur | 20.59 |
| Aston Villa | 20.22 |
| West Bromwich Albion | 20.19 |
| Crystal Palace | 19.37 |
| Bournemouth | 19.35 |
| West Ham United | 19.26 |
| Chelsea | 18.34 |
| Manchester United | 17.94 |
| Arsenal | 16.98 |
| Swansea City | 16.51 |
| Stoke City | 16.36 |
| Sunderland | 15.96 |
| Everton | 13.31 |
| Newcastle United | 11.28 |

So Man City, Liverpool and… Norwich (apparently) allow the least progression outside their territory. Newcastle and Everton leave the biggest gaps for opponents to operate inside.
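Here’s a minimal sketch of the filtering step behind the outside-territory view: total up the opposition’s progression from events that land outside every player’s territory. It treats each event as a single (x, y) point with a `gain` value, which is a simplification, and it stops short of converting that total into the PATCH-style score in the table (where higher is better), since the post doesn’t spell out that conversion.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: is (x, y) inside the simple polygon [(x, y), ...]?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def progression_outside(events, territories):
    """events: iterable of (x, y, gain) opposition progression events (assumed
    layout); territories: list of player polygons. Returns the total gain from
    events that fall outside every player's territory."""
    total = 0.0
    for x, y, gain in events:
        if not any(point_in_polygon(x, y, poly) for poly in territories):
            total += gain
    return total
```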

Getting Goal Side

Above, we saw how much goes on in empty spaces. The thing that worries me most about PATCH, and particularly the approach I’ve taken to trimming events for territory, is the space behind a defender. Perhaps we should leave in all goal-side events for a defender? Going further, should we project their territory back to the goal line, or even to the goalmouth itself?

Well, you’re going to have to wait to find out. In my next post I’m going to finally get around to looking at some individual player scores, and I’ll experiment then with how defenders should be blamed for goal-side events.