Today we’ll look at players in Europe through the lens of my PATCH defensive metric. If you can’t be bothered trawling through an entire post to understand the method, you only really need to know this: PATCH measures how much a defender prevents their opponents from advancing the ball through their territory. Clearly that leaves lots of information about defenders and defences in general on the table, but you’ll have enough information by the end of this post to bash me over the head with specific examples of players you think it’s misjudging. In fact, I’ll even help you out along the way and spell out all my worries about the metric, and the things I think it ought to do in the future.

That said, in PATCH’s defence, it has some nice characteristics:

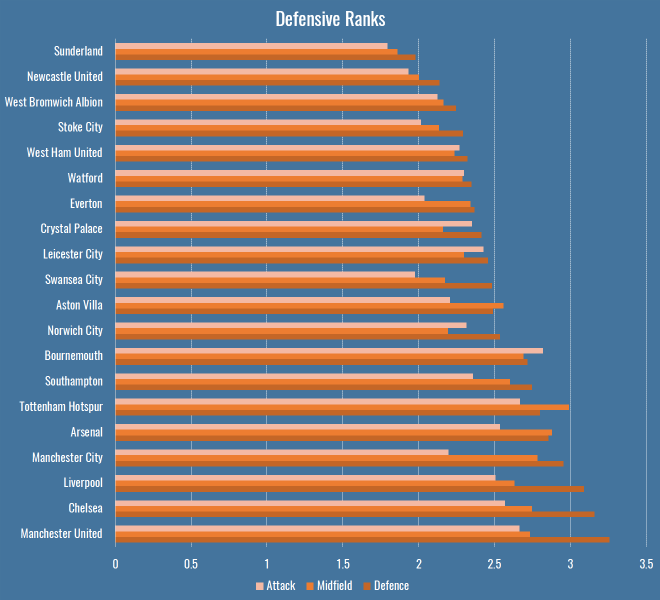

- A team’s medianish PATCH score correlates pretty well with shot numbers conceded by a team over a season, at around 0.7.

- It correlates slightly better with xG conceded by teams, at around 0.75.

- It persists year-on-year for teams, correlated at around 0.6.

- A player’s median PATCH persists year-on-year at around 0.3.
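The team-level checks above can be sketched roughly as follows. This is a minimal illustration, not the actual PATCH code: it assumes "medianish" means a plain median over a team's player performances, and every number in it is made up purely to show the shape of the calculation.

```python
from math import sqrt
from statistics import median


def pearson(xs, ys):
    """Plain Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def team_medians(performances):
    """Map team -> median PATCH over all of that team's performances."""
    by_team = {}
    for team, patch in performances:
        by_team.setdefault(team, []).append(patch)
    return {team: median(values) for team, values in by_team.items()}


# Hypothetical teams and values, not real PATCH data.
performances = [("A", 4.0), ("A", 5.0), ("A", 6.0),
                ("B", 3.0), ("B", 3.5), ("B", 4.0),
                ("C", 2.0), ("C", 2.5), ("C", 3.0)]
shots_conceded = {"A": 300, "B": 400, "C": 500}

medians = team_medians(performances)
teams = sorted(medians)
r = pearson([medians[t] for t in teams], [shots_conceded[t] for t in teams])
```

In this toy data the higher-scoring teams concede fewer shots, so `r` comes out negative. The year-on-year figures would use the same `pearson` function, fed with one season's medians against the next season's.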

But putting numbers aside, let’s see how you feel about some individual player values. If you look at the standard deviation of median PATCH values by minutes played, you can see things settle down at around 600 minutes:

That’s because at very low values you get some weird outlying games, where players haven’t had any opponents in their territory and so score very highly. Just to be safe, we’ll set a cutoff a little higher, so here are the top European centre-backs with more than 900 minutes this season:

| Competition | Team | Player | PATCH |
|---|---|---|---|
| Italian Serie A | Fiorentina | Gonzalo Rodríguez | 6.27 |
| French Ligue 1 | Lyon | Samuel Umtiti | 5.79 |
| Spanish La Liga | Real Madrid | Pepe | 5.52 |
| Spanish La Liga | Barcelona | Gerard Piqué | 5.24 |
| German Bundesliga | FC Bayern München | Jerome Boateng | 5.20 |
| Spanish La Liga | Barcelona | Javier Mascherano | 5.20 |
| Spanish La Liga | Málaga | Raúl Albentosa | 5.19 |
| Italian Serie A | Roma | Kostas Manolas | 5.06 |
| Italian Serie A | Lazio | Wesley Hoedt | 4.99 |
| German Bundesliga | Borussia Dortmund | Sokratis | 4.94 |
| Italian Serie A | Fiorentina | Davide Astori | 4.91 |
| French Ligue 1 | Paris Saint-Germain | Thiago Silva | 4.86 |
| English Premier League | Liverpool | Martin Skrtel | 4.72 |
| English Premier League | Liverpool | Mamadou Sakho | 4.72 |
| Italian Serie A | Internazionale | Jeison Murillo | 4.71 |
| Italian Serie A | Milan | Alex | 4.66 |
| Italian Serie A | Juventus | Andrea Barzagli | 4.63 |
| French Ligue 1 | St Etienne | Loic Perrin | 4.59 |
| French Ligue 1 | GFC Ajaccio | Roderic Filippi | 4.57 |
| Italian Serie A | Juventus | Leonardo Bonucci | 4.49 |
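The stability check and minutes cutoff behind the table above could be reproduced along these lines. It's a sketch under assumptions: the `(player, minutes, patch)` row layout, the 300-minute bucket size and the player names are all hypothetical, and population standard deviation is used simply because it copes with small buckets.

```python
from statistics import median, pstdev


def player_summaries(rows):
    """rows: (player, minutes, patch) per game -> {player: (total_minutes, median_patch)}."""
    games = {}
    for player, minutes, patch in rows:
        games.setdefault(player, []).append((minutes, patch))
    return {
        player: (sum(m for m, _ in played), median(p for _, p in played))
        for player, played in games.items()
    }


def spread_by_minutes(summaries, bucket_size=300):
    """Standard deviation of player medians within each total-minutes bucket."""
    buckets = {}
    for total_minutes, med in summaries.values():
        start = (total_minutes // bucket_size) * bucket_size
        buckets.setdefault(start, []).append(med)
    return {start: pstdev(meds) for start, meds in sorted(buckets.items())}


def qualified(summaries, min_minutes=900):
    """Players with more than `min_minutes`, best median PATCH first."""
    keep = [(p, med) for p, (mins, med) in summaries.items() if mins > min_minutes]
    return sorted(keep, key=lambda item: item[1], reverse=True)


# Made-up rows: two bit-part players with wild scores, two regulars.
rows = [("sub_a", 45, 9.0), ("sub_b", 60, 1.0)]
rows += [("regular_a", 90, 4.0)] * 11 + [("regular_b", 90, 3.5)] * 11

summaries = player_summaries(rows)
spread = spread_by_minutes(summaries)
top = qualified(summaries)
```

With these toy rows the low-minutes bucket has a far larger spread than the bucket holding the regulars, which is the pattern the cutoff is meant to guard against.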

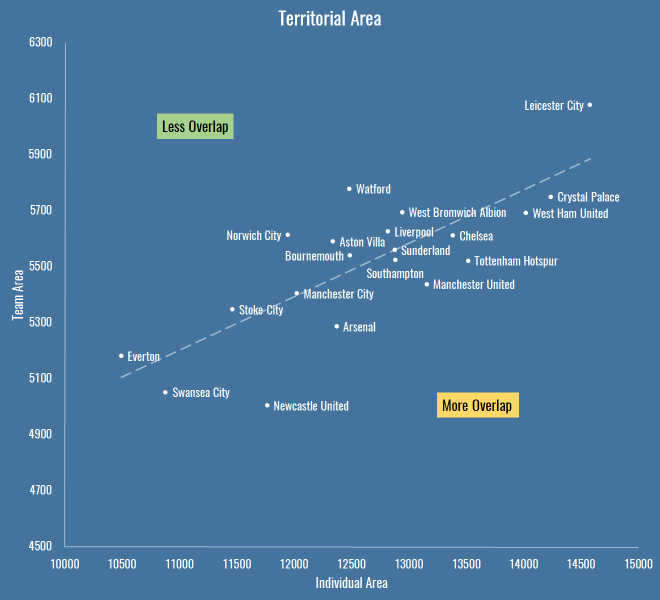

There are some clumps of teams here, so we should immediately be suspicious that we’re measuring team effects as much as player effects – PATCH currently doesn’t adjust for teams’ average scores, nor, for that matter, does it score leagues differently. But these numbers are mostly defensible. It’s fun to note that Raúl Albentosa was a Derby County signing during Steve McClaren’s reign, and McClaren has recently been targeting Samuel Umtiti at Newcastle, so it’s nice to know I’ve built the perfect metric for him, even if you don’t buy it.

The same metric works for defensive and centre midfielders too:

| Competition | Team | Player | PATCH |
|---|---|---|---|
| Spanish La Liga | Barcelona | Sergio Busquets | 5.66 |
| French Ligue 1 | Lyon | Maxime Gonalons | 5.50 |
| Spanish La Liga | Barcelona | Ivan Rakitic | 5.21 |
| Italian Serie A | Fiorentina | Milan Badelj | 5.19 |
| Italian Serie A | Roma | Daniele De Rossi | 4.84 |
| French Ligue 1 | Lyon | Corentin Tolisso | 4.81 |
| Italian Serie A | Fiorentina | Borja Valero | 4.60 |
| Italian Serie A | Fiorentina | Matias Vecino | 4.48 |
| Spanish La Liga | Sevilla | Grzegorz Krychowiak | 4.45 |
| Italian Serie A | Lazio | Lucas Biglia | 4.31 |
| Italian Serie A | Internazionale | Felipe Melo | 4.30 |
| Spanish La Liga | Las Palmas | Vicente Gómez | 4.28 |
| English Premier League | Liverpool | Emre Can | 4.26 |
| Spanish La Liga | Sevilla | Steven N’Zonzi | 4.26 |
| German Bundesliga | Borussia Dortmund | Ilkay Gündogan | 4.26 |
| Italian Serie A | Roma | Radja Nainggolan | 4.25 |
| German Bundesliga | FC Bayern München | Xabi Alonso | 4.24 |
| German Bundesliga | FC Bayern München | Arturo Vidal | 4.11 |
| Spanish La Liga | Rayo Vallecano | Raúl Baena | 4.10 |
| English Premier League | Liverpool | Jordan Henderson | 4.09 |

Busquets on top, all is right in the world.

Prospects

We can already see some talented youngsters in the tables above, so let’s focus purely on players who were 23 or under at the start of this season. I’ve relaxed the minutes cutoff to 600; here’s the top 30, taken from all midfielders and defenders:

| Competition | Team | Player | Date of Birth | Minutes | PATCH |
|---|---|---|---|---|---|
| French Ligue 1 | Lyon | Samuel Umtiti | 14/11/1993 | 1852 | 5.79 |
| Italian Serie A | Lazio | Wesley Hoedt | 06/03/1994 | 1507 | 4.99 |
| French Ligue 1 | Lyon | Corentin Tolisso | 03/08/1994 | 2188 | 4.63 |
| German Bundesliga | FC Bayern München | Joshua Kimmich | 08/02/1995 | 727 | 4.54 |
| English Premier League | Liverpool | Emre Can | 12/01/1994 | 2054 | 4.44 |
| German Bundesliga | VfB Stuttgart | Timo Baumgartl | 04/03/1996 | 1287 | 4.30 |
| German Bundesliga | Bayer 04 Leverkusen | Jonathan Tah | 11/02/1996 | 2076 | 4.27 |
| French Ligue 1 | Lyon | Sergi Darder | 22/12/1993 | 696 | 4.15 |
| German Bundesliga | VfL Wolfsburg | Maximilian Arnold | 27/05/1994 | 877 | 4.10 |
| German Bundesliga | FC Bayern München | Kingsley Coman | 13/06/1996 | 895 | 3.98 |
| Spanish La Liga | Rayo Vallecano | Diego Llorente | 16/08/1993 | 2228 | 3.97 |
| French Ligue 1 | Paris Saint-Germain | Marquinhos | 14/05/1994 | 988 | 3.95 |
| Italian Serie A | Empoli | Federico Barba | 01/09/1993 | 927 | 3.93 |
| English Premier League | Tottenham Hotspur | Dele Alli | 11/04/1996 | 730 | 3.93 |
| English Premier League | Tottenham Hotspur | Eric Dier | 15/01/1994 | 2375 | 3.79 |
| English Premier League | Arsenal | Héctor Bellerín | 19/03/1995 | 2346 | 3.79 |
| Spanish La Liga | Real Sociedad | Aritz Elustondo | 11/01/1994 | 1643 | 3.68 |
| Spanish La Liga | Atlético de Madrid | Saúl Ñíguez | 21/11/1994 | 1337 | 3.66 |
| Italian Serie A | Lazio | Sergej Milinkovic-Savic | 27/02/1995 | 715 | 3.65 |
| French Ligue 1 | Monaco | Wallace | 14/10/1994 | 1654 | 3.63 |
| German Bundesliga | Borussia Dortmund | Matthias Ginter | 19/01/1994 | 1414 | 3.62 |
| Italian Serie A | Milan | Alessio Romagnoli | 12/01/1995 | 2102 | 3.62 |
| German Bundesliga | Borussia Dortmund | Julian Weigl | 08/09/1995 | 1575 | 3.59 |
| Italian Serie A | Lazio | Danilo Cataldi | 06/08/1994 | 1024 | 3.55 |
| Italian Serie A | Napoli | Elseid Hysaj | 02/02/1994 | 2279 | 3.52 |
| Spanish La Liga | Sevilla | Sebastián Cristóforo | 23/08/1993 | 614 | 3.51 |
| English Premier League | Chelsea | Kurt Zouma | 27/10/1994 | 1949 | 3.51 |
| French Ligue 1 | Nice | Olivier Boscagli | 18/11/1997 | 792 | 3.48 |
| Spanish La Liga | Getafe | Emiliano Velázquez | 30/04/1994 | 745 | 3.44 |
| Italian Serie A | Sampdoria | David Ivan | 26/02/1995 | 785 | 3.41 |

Note: the tables above filter to performances at centre-back or in midfield. If the values differ in this last table, it’s because it considers performances in a wider variety of positions.
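For anyone wanting to rebuild a list like this, the age and minutes filter might look something like the sketch below. The season start date is an assumption (no exact date is given here), as is treating the 600-minute cutoff as inclusive. The two real rows are copied from the table above; the other two players are invented to exercise the filter.

```python
from datetime import date, datetime

# Assumption: a nominal start date for the season, chosen for illustration.
SEASON_START = date(2015, 8, 1)


def age_on(date_of_birth, day):
    """Age in whole years on `day`."""
    had_birthday = (day.month, day.day) >= (date_of_birth.month, date_of_birth.day)
    return day.year - date_of_birth.year - (0 if had_birthday else 1)


def prospects(players, max_age=23, min_minutes=600, top_n=30):
    """players: dicts with 'player', 'dob' (DD/MM/YYYY), 'minutes', 'patch'."""
    keep = []
    for p in players:
        dob = datetime.strptime(p["dob"], "%d/%m/%Y").date()
        if age_on(dob, SEASON_START) <= max_age and p["minutes"] >= min_minutes:
            keep.append(p)
    return sorted(keep, key=lambda p: p["patch"], reverse=True)[:top_n]


players = [
    {"player": "Joshua Kimmich", "dob": "08/02/1995", "minutes": 727, "patch": 4.54},
    {"player": "Samuel Umtiti", "dob": "14/11/1993", "minutes": 1852, "patch": 5.79},
    # Invented players: one too old, one short of minutes.
    {"player": "Hypothetical Veteran", "dob": "01/01/1985", "minutes": 2000, "patch": 6.00},
    {"player": "Hypothetical Debutant", "dob": "01/01/1997", "minutes": 200, "patch": 7.00},
]

shortlist = [p["player"] for p in prospects(players)]
```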

So, cheer up Timo Baumgartl, PATCH doesn’t count mistakes. You’ll note again big clumps of players from the same teams (kudos, Lyon) – we know by now we’re probably measuring some systematic effects here. It’s also worth pointing out that if a young player is getting this level of minutes, in the big 5 leagues, they’re probably at a certain level without even looking at their numbers. But again, at first glance, this is a decent list.

Liverpool’s First Choice Centre-Backs

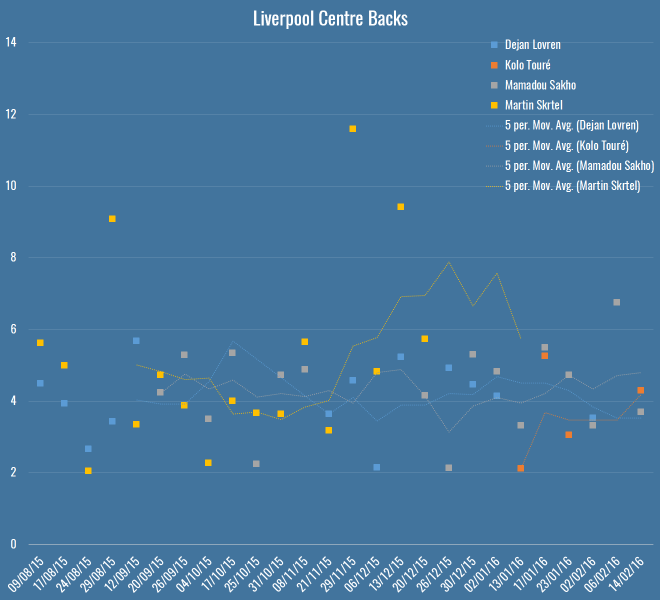

At the Opta Pro Forum I blurted out to the Liverpool contingent that my pet defensive metric quite liked their defending, to which they replied “ours doesn’t.” So I was a little crestfallen, but I’ll continue to talk myself out of a job here: Liverpool concede the second fewest shots in the league, so I’m right. They also have the worst save percentage in the league but nevertheless renewed Mignolet’s contract, so they’re wrong. QED. Let’s look more closely:

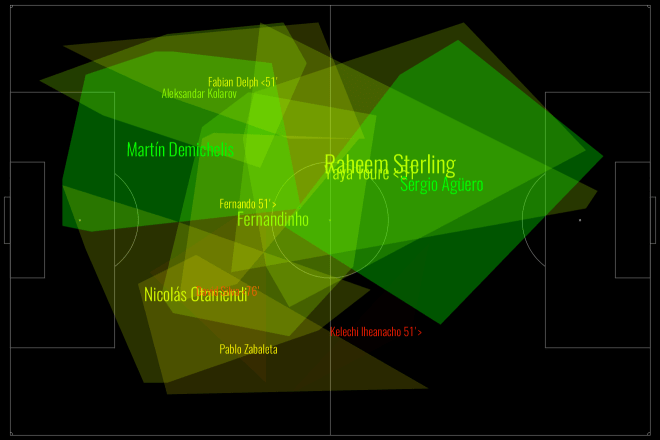

Here you’ve got all Liverpool’s games this season – Rodgers up to the fateful Merseyside derby on the 4th October, Klopp soon after. The markers show individual PATCH performances, and the lines are five-game moving averages (although Touré isn’t quite there yet). The average PATCH for EPL centre-backs is around 3.3, and you’ll note that Liverpool are regularly exceeding that. You can also see that Skrtel had some insane outliers, but maintained pretty good form for Klopp until his injury (which – if you’re to believe renowned fitness troll Raymond Verheijen – was inevitable). The fight for second place is closer, but even taking Lovren and Sakho side-by-side, I don’t believe you’re left with a terrible pairing.
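The five-game lines can be approximated with a simple trailing average. This sketch assumes the window looks backwards and shows nothing until a player has five games, which would fit a player with too few appearances not having a line yet; the exact windowing used for the chart isn't specified. The per-game values are made up.

```python
def moving_average(values, window=5):
    """Trailing mean over the last `window` games; None until enough games exist."""
    averages = []
    for i in range(len(values)):
        if i + 1 < window:
            averages.append(None)
        else:
            recent = values[i + 1 - window : i + 1]
            averages.append(sum(recent) / window)
    return averages


# Made-up per-game PATCH values for one player, in match order.
patch_by_game = [3.0, 4.0, 5.0, 4.0, 4.0, 9.0]
line = moving_average(patch_by_game)
```

Note how the single outlying game at the end drags the average up noticeably, which is worth bearing in mind when reading lines built from so few games.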

So, I don’t believe Liverpool’s defence is terrible and I think they have a solid foundation both in defence and midfield for Klopp to build on over the next season. I do believe they’ve been unlucky, as James Yorke points out in this article on Statsbomb. It’s funny to compare Everton and Liverpool’s defences this season – they both sit on 36 goals conceded in the league. Tim Howard has taken a lot of heat this year for his performances, all while facing 4.7 shots on target a game – the fourth worst in the league. Mignolet’s been facing 3.3 – the second fewest. While Howard has now been unceremoniously dropped and is soon to be shipped off to the MLS, Mignolet gets his contract renewed. Sure, some of this is luck and not entirely his fault, but I genuinely believe you should not lay the blame on Liverpool’s defence; there’s not a lot more they could do to make Mignolet’s life quieter.

Arsenal’s Midfield

Through injuries, sentimentality or pure stubbornness, it’s hard to tell if Wenger knows his best midfield this season. I asked on Twitter and a lively debate ensued, but excluding the lamentation of Wilshere believers, the most common answer was Coquelin & Cazorla, with some pondering ways to insert Ramsey into the mix. What does PATCH think, purely defensively, of their appearances in the centre of the field?

Okay, well, first thoughts are that this is a graph format looking to get retired early, but here you have the four players who have put reasonable minutes into Arsenal’s central midfield, with the markers again showing their PATCH values in each game week, and the lines again showing a five-game moving average. The average PATCH for a midfielder in the EPL is basically the same as for a defender, around 3.3. This graph seems to imply that Cazorla has very good and very average games, and something similar could be said for Flamini. Ramsey doesn’t seem like anything special, but is pretty low-variance. Coquelin seemed to start the season very strongly, but was fairly average in the lead-up to his injury.

Let’s break it down more simply. Here are the averages:

| Player | PATCH |
|---|---|
| Santiago Cazorla | 4.17 |
| Francis Coquelin | 3.83 |
| Mathieu Flamini | 3.79 |
| Aaron Ramsey | 3.60 |

So in black and white, we seem to more or less agree with Arsenal fans’ instincts.

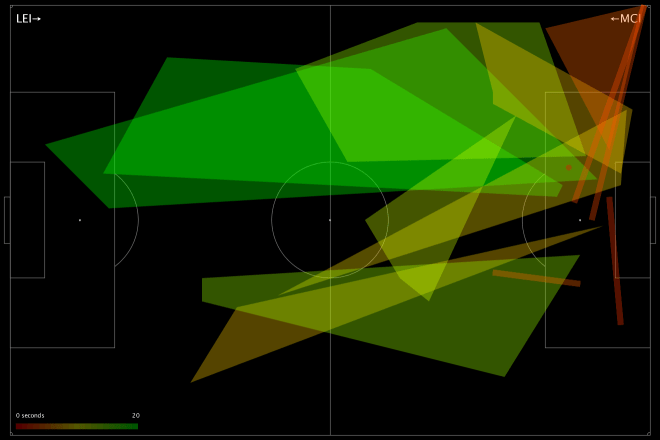

N’Golo Kanté

What of players whose defending we rave about but who don’t make an appearance high up the PATCH ratings? N’Golo Kanté is way down the list, with a very middling 3.12. What’s happened there? Well, let me reiterate that PATCH measures territory and ball progression, nothing else. As I mentioned on the Analytics FC podcast recently, not all ball progression is bad. Much has been made of Leicester’s “bend, don’t break” defensive scheme this season – they give up territory but their low block often makes up for it, which means their midfield isn’t likely to score highly for repelling the opponent. Kanté himself regularly relies on pace and last-ditch tackles (and he is an excellent tackler) to retrieve the ball once it’s in his territory, but if a pass has been completed in that territory, PATCH has already given him a demerit.

So… PATCH is useless because it misses demonstrably good players? Well, I’m not sure I’d go as far as calling Leicester’s defence bad, but it’s certainly well below par for a league leader, as Tim at 7amkickoff recently analysed. That said, I’ll admit I’m a little uncomfortable. I’ve said elsewhere, the essence of PATCH, or really any defensive metric, is this:

- Whose fault is it?

- How bad is it?

In PATCH, the whose fault part is calculated by territory (and there are lots of ways to do this better) and the how bad bit is done through ball progression. Alternatives to the second might pick Kanté up better – how many moves enter his territory, but never leave? That would be an interesting one to look at, and something I’ll explore soon.
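To make that alternative a bit more concrete, here is one way the “enters his territory but never leaves” idea might be counted. To be clear, this is not part of PATCH and nothing like it has been tested here: the data model (each opposition move reduced to the ordered list of defenders whose territory the ball passed through) and the position labels are entirely hypothetical.

```python
def containment_rate(moves, defender):
    """Share of opposition moves entering `defender`'s territory that end there.

    Each move is the ordered list of territory owners the ball passed through.
    Returns None if no move ever entered the defender's territory.
    """
    entered = contained = 0
    for move in moves:
        if defender not in move:
            continue
        entered += 1
        first_entry = move.index(defender)
        # Contained only if the ball never leaves once it has arrived.
        if all(owner == defender for owner in move[first_entry:]):
            contained += 1
    return contained / entered if entered else None


# Made-up moves, labelled by whose territory the ball was in at each step.
moves = [
    ["LB", "DM"],        # enters DM's territory and dies there
    ["DM", "DM"],        # starts and ends with DM
    ["DM", "CB"],        # gets through DM
    ["RB", "CB"],        # never involves DM
    ["LB", "DM", "CB"],  # gets through DM
]

rate = containment_rate(moves, "DM")
```

A player who wins the ball back late would score well on something like this even while conceding territory, which is the gap described above.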

For now, let’s just say that he’s valuable for Leicester inasmuch as his defensive skills turn into attacks very effectively, because it’s Leicester’s attack (and let’s face it, their luck) that is powering their title challenge, and not necessarily their defence. And that, dear reader, is another thing that PATCH doesn’t measure in defenders.

Conclusion

Hopefully, if you’ve got this far, you believe there’s value in something like PATCH as a way of measuring defenders. It’s certainly entangled with teams’ systematic effects, and we suspect it has some false negatives. Looking at these outputs, I don’t think there are tons of false positives, but then Flamini rears his head, so who knows.

I’m constantly working on PATCH, so I’d love to hear your ideas for places it might fall down, or things you’d like to see it applied to. To that end, I’ve bunged PATCH values for all EPL performances this season on Github. This file contains:

- Team

- Opposition

- Match date

- Player

- Date of Birth

- Nationality

- Starting lineup X position

- Starting lineup Y position

- Minutes played

- PATCH

Play, critique, ignore, do what you will. I’ll see if I can get to the point where these are updated for all players in a bunch of leagues every week, but right now I can’t guarantee the scoring is going to be at all stable with all the changes I’m making.
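As a starting point for playing with the file, something like the following would give a median PATCH per player with a minutes cutoff. The header names used here are guesses based on the field list above, so check them against the real file first; the sample rows are invented so the snippet runs on its own.

```python
import csv
import io
from statistics import median

# Assumed header names and invented rows; the real file may label columns differently.
SAMPLE = """Team,Opposition,Match date,Player,Minutes played,PATCH
Alpha,Beta,2015-08-08,Player One,90,4.0
Alpha,Gamma,2015-08-15,Player One,90,5.0
Alpha,Beta,2015-08-08,Player Two,30,8.0
"""


def median_patch_by_player(csv_file, min_minutes=0):
    """Median PATCH per player, keeping players with at least `min_minutes` in total."""
    minutes, scores = {}, {}
    for row in csv.DictReader(csv_file):
        name = row["Player"]
        minutes[name] = minutes.get(name, 0) + int(row["Minutes played"])
        scores.setdefault(name, []).append(float(row["PATCH"]))
    return {n: median(s) for n, s in scores.items() if minutes[n] >= min_minutes}


result = median_patch_by_player(io.StringIO(SAMPLE), min_minutes=90)
```

To run it on the downloaded file, pass an open file handle instead of the `io.StringIO` wrapper.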